Scaling LLMs for Science and Ongoing Collaborations

2023-08-17 @ Data-Intensive Computing & AI/ML Apps @ Scale

Loooooooooong Sequence Lengths

- Working with the Microsoft DeepSpeed team to enable longer sequence lengths (context windows) for LLMs

SEQ_LEN for both 25B and 33B models [WIP]

saforem2/{scaling4science, Megatron-DS-Benchmarking}

microsoft/DeepSpeed-Megatron

Ongoing Work & Collaborations

Scaling LLMs

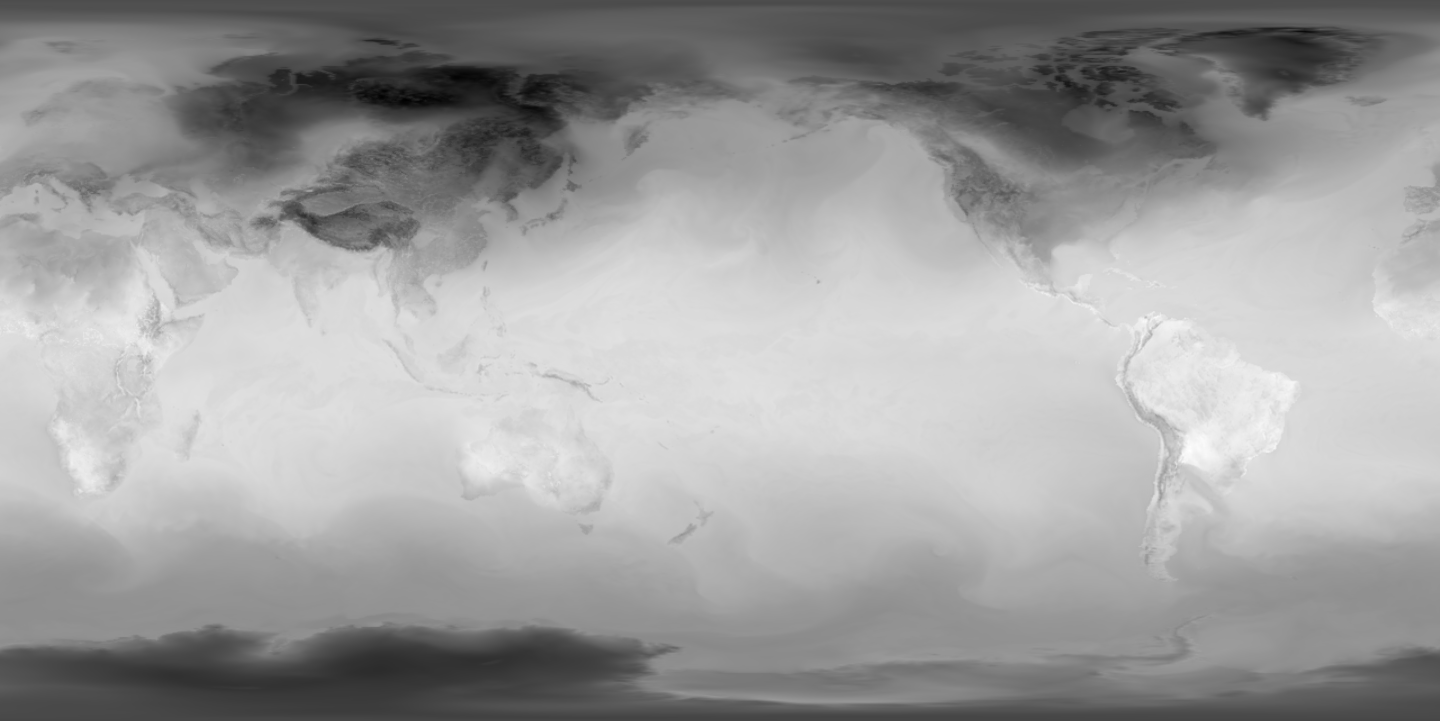

Climate Modeling

ViT for Climate Models [WIP]

Thank you!

Huge shout-out to:

- Venkat Vishwanath

- James Osborn

- Xiao-Yong Jin

- Rao Kotamarthi

- Romit Maulik

- Troy Arcomano

- Microsoft DeepSpeed Team

- ALCF Data Science Team (everyone!)

- ALCF Staff (Ops, Performance, Software, User Support / Documentation, …)

Acknowledgements

This research used resources of the Argonne Leadership Computing Facility, which is a DOE Office of Science User Facility supported under Contract DE-AC02-06CH11357.